So I’ve been thinking about algorithms a lot recently. Let’s talk about what they can tell us about our own biases and what we need to do with that information. With cameos from Garfield, AC/DC, and Harry Potter.

You may be aware that predictive text had a bit of a moment in late 2017/early 2018. It largely stemmed from this absolute masterpiece of a predictive-text Harry Potter chapter that had the perfect blend of absurdity and coherence. I could talk for a while about the way this captured the public imagination, but I’ll just dump my favorite Botnik creation here and move on.

Botnik, interestingly, was not truly automated predictive text; part of its coherence came from the user being able to control what amounted to a predictive text keyboard. This allowed people to avoid the truly incomprehensible stretches that you still see in other bot-created text. And of course, many of the imitators that are out there, while still uproariously funny, are actually human-created and don’t use any machine inputs at all.

But there is still plenty of truly predictive text out there, and it’s getting better. Markov chains are one method of creating text that can be just coherent enough to provide entertainment value. They’re not new, and the term actually refers to the underlying statistical process, in which each step depends only on the current state, so Markov chains have much broader uses than generating text.
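For a sense of how little machinery this takes, here is a minimal Python sketch of a word-level Markov chain text generator. It is not the code behind any project mentioned in this post; the toy corpus, the function names, and the `order` parameter are all made up for illustration.

```python
import random
from collections import defaultdict


def build_chain(text, order=1):
    """Map each run of `order` words to the words seen right after it."""
    words = text.split()
    chain = defaultdict(list)
    for i in range(len(words) - order):
        state = tuple(words[i:i + order])
        chain[state].append(words[i + order])
    return dict(chain)


def generate(chain, length=12, seed=None):
    """Walk the chain from a random starting state, one word at a time."""
    rng = random.Random(seed)
    state = rng.choice(sorted(chain))  # sorted so a given seed is repeatable
    output = list(state)
    while len(output) < length:
        # Look up the most recent `order` words to find possible next words.
        followers = chain.get(tuple(output[-len(state):]))
        if not followers:  # dead end: this state never had a successor
            break
        output.append(rng.choice(followers))
    return " ".join(output)


# Hypothetical toy corpus; a real project would use a full set of lyrics.
corpus = "the cat sat on the mat and the cat ate the lasagna"
chain = build_chain(corpus)
print(generate(chain, seed=0))
```

The thing to notice is that the generator can only ever emit word sequences that already appear in its source text. Whatever patterns are in the input, good or bad, are the only patterns it has to work with.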

Still, Markov chains have been in use for meme purposes for years. During the height of the Garfield-meme era, and in the shadow of things like Garfield Minus Garfield and Lasagna Cat, one of my favorites was Garkov, created in 2008.

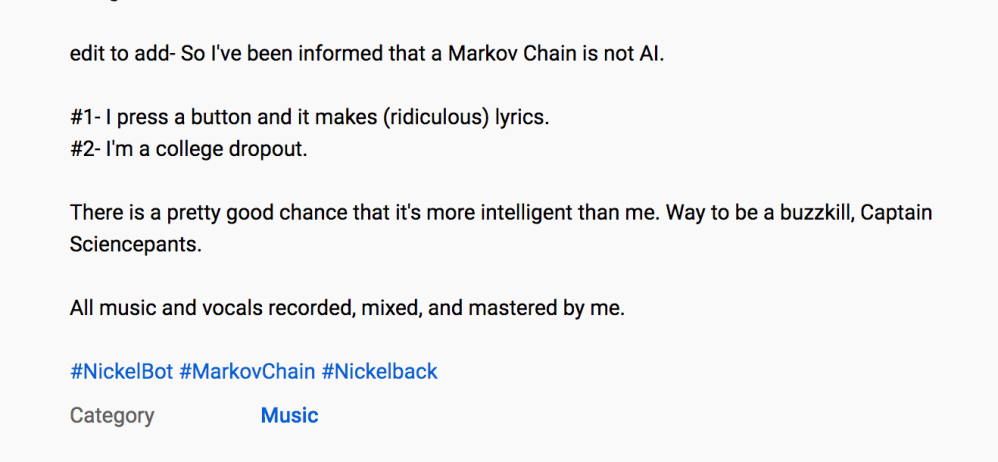

This brings us to the algorithm I want to discuss. A YouTuber is using Markov chains to write songs in the style of various bands. The first entry in this was a Nickelback parody, Nobody Died Every Single Day. I don’t really know anything about Nickelback other than relevant and important memes. But Nobody Died Every Single Day is basically fine, as these kinds of projects go.

The next entry, though, was an AC/DC project that produced some fascinating results. I was truly not ready for it.

I think it’s important to note at this stage that I have no reason to doubt that the writing process for these is highly reliant on the Markov chain that the artist built. They are at the higher end of coherence for predictive text projects, but this explanation in the Nickelback description seems to indicate that the algorithm is doing a good bit of work.

Anyway, on to AC/DC. The song is called Great Balls. And, well…not only is it mostly coherent, it’s coherently transphobic.

You may think I’m exaggerating here, but here’s the end of Verse 1 along with the chorus:

You fool around, you women,

With too many pills, yeah

She got Great balls

And big balls

Too many women with the balls

Seems like a bone given’ the balls

A whole lotta woman ‘cause I’m a ball

The obvious parallels here are to AC/DC’s songs She’s Got Balls and Big Balls. The former is more directly relevant, but its lyrics are simply about an assertive woman (with some trans women on the internet claiming the song as their own affirmation). The latter is more direct, but even it is a double entendre for a high-class party. And perhaps it’s the limits of the algorithm that any nuance and cleverness present in the original songs is completely bulldozed here. But I think there’s more going on here.

I’ve been turning this over in my head for over two weeks now. What do we even do with this? There are three takeaways that I can come up with, although I’m sure smarter people than me can make more sense of this.

First: If we assume that this is largely algorithm-reliant, I think it shows just how much our language both draws from and reinforces the biases that we have. This isn’t a revelation of any secret transphobia, but it does illustrate that our common language has not been particularly inclusive.

And this matters because, until quite recently, trans visibility in mainstream culture mostly came through tongue-in-cheek songs like this, if not worse. Ace Ventura literally came out during Althea Garrison’s tenure as the first trans state legislator in the United States. Garrison, who served on the Boston City Council in 2019, has become more conservative over the years and campaigned in 2019 as an open Trump supporter. But in the 25 intervening years, we’ve done some work on making the language we use more inclusive.

There is still work to do. And that work does matter.

Second: I think this is a clear illustration that even objective algorithms are going to have biased outputs if the inputs are biased.

And third: Those inputs do not need to be intentionally biased to create a problematic output.

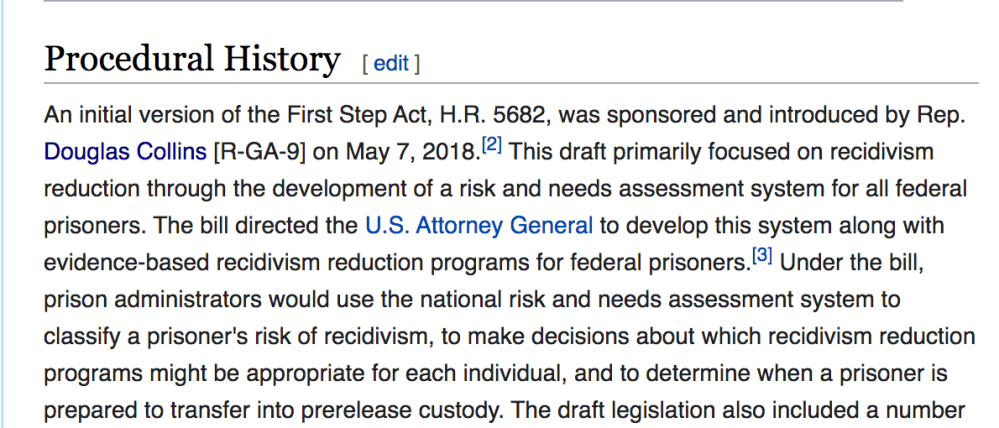

Together, these two implicate a lot of recent and current policymaking. Take the First Step Act, which was passed as a collection of really good tweaks to federal criminal law (although fundamental reforms would have been better). The act actually started as a Republican proposal to make determinations about the release risk of various inmates through algorithms.

Democrats didn’t sign on in large numbers until the bill included some desperately needed sentencing reforms. But the algorithm, which was named the PATTERN tool, survived.

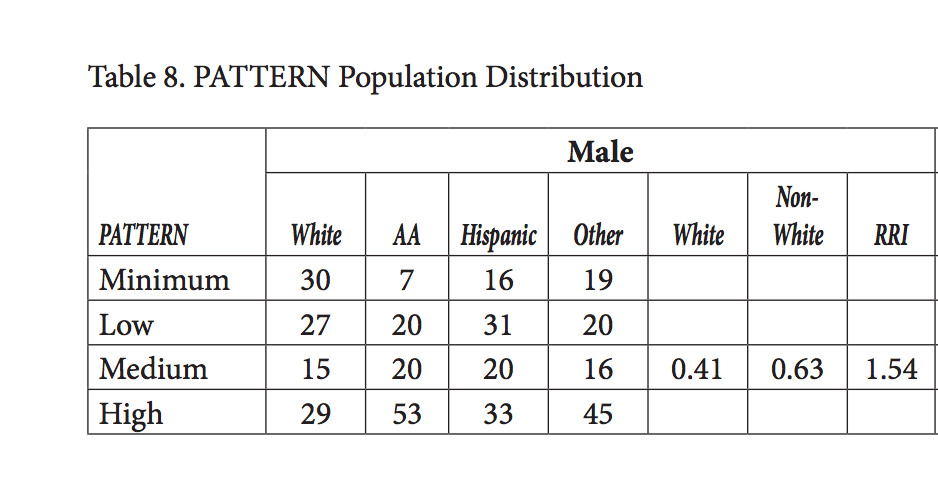

PATTERN is used for sentence reductions, for prison assignments, and more. The process of calculating a PATTERN score was discussed in an NIJ report, and, well, it’s concerning. The tool uses a bunch of different personal factors for each inmate, many of which correlate with socioeconomic status and race. After accounting for all of those factors, the tool places every inmate in one of four risk level categories. And lo and behold, the PATTERN scores show a stunning racial disparity.

This is the baseline we’re currently using for things like eligibility for COVID-19 compassionate release.

Calls for reforming PATTERN have followed. But I wonder if removing bias is even possible when the fundamental question of recidivism, “did you crime again,” is really “did police find out you crimed again.” Biased enforcement seems almost impossible to control for.
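To make that measurement problem concrete, here is a small back-of-the-envelope sketch in Python. Every number in it is hypothetical and chosen only to show the arithmetic; none of it comes from PATTERN, the NIJ report, or any real data. It assumes two groups that reoffend at exactly the same rate but are policed at different intensities.

```python
# Hypothetical numbers, chosen only to illustrate the arithmetic.
TRUE_REOFFENSE_RATE = 0.30  # identical for both groups by construction

# Assumed chance that a new offense is actually detected and recorded.
DETECTION_RATE = {
    "lightly_policed": 0.40,
    "heavily_policed": 0.80,
}


def observed_recidivism(true_rate, detection_rate):
    """Share of a group that shows up in the data as having reoffended."""
    return true_rate * detection_rate


observed = {
    group: observed_recidivism(TRUE_REOFFENSE_RATE, rate)
    for group, rate in DETECTION_RATE.items()
}

for group, rate in observed.items():
    print(f"{group}: observed recidivism {rate:.2f}")
```

Under these made-up assumptions, the heavily policed group looks twice as likely to reoffend even though the underlying behavior is identical. Any tool trained on the recorded outcomes would learn the enforcement gap rather than a real difference in risk.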

I listened to a talk recently about the gap between explainable AI and ethical AI. Understanding that seems particularly relevant here, even in this simple and otherwise innocuous musical setting. As the First Step Act shows, we don’t have to imagine what the problems are when lives might be affected.

I’m still interested in future songs from this Markov chain project. And, admittedly, Great Balls is unreasonably catchy. But it’s a reminder that most supposedly “objective” mechanisms serve to perpetuate, rather than remove, bias.

Our understanding, and our oversight, need to be crafted accordingly.